AI Chatbots in Web Shops, Part 2

In the first part of this series, we explored why so many chatbot solutions in e-commerce fail to meet user needs. Without genuine integration into the provider’s product and brand world and deep technical embedding into the website, they cannot replicate the multi-layered process of expert consultation. The result: frustrating dialogues and lost customers.

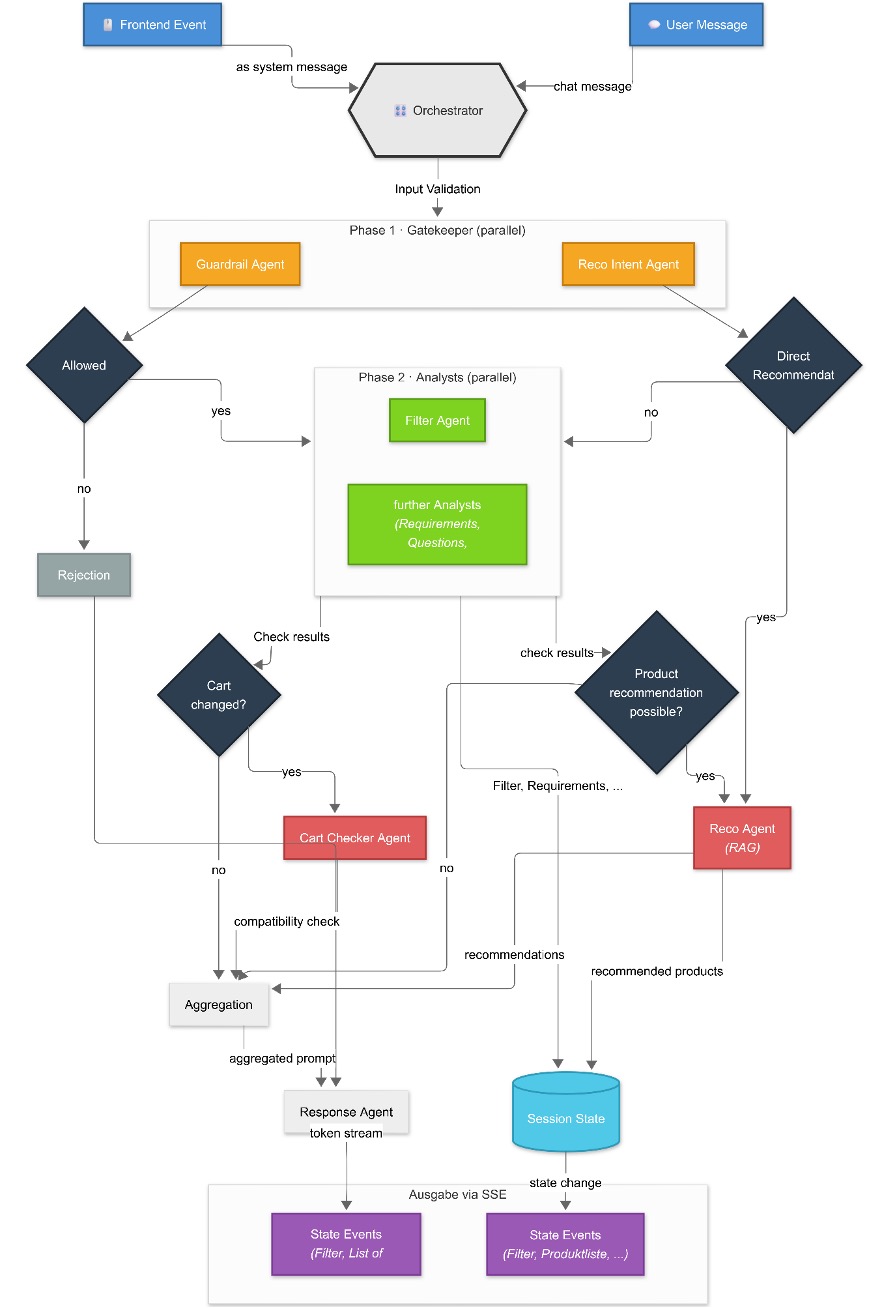

Today we go a step further and dive into the technical implementation of a better solution: a multi-agent system built on the OpenAI Agents SDK. Using a chatbot in a web shop for coffee machines and accessories, we show how dividing responsibilities and establishing close frontend-backend communication creates a significantly more intelligent and helpful advisory experience.

Figure 1 shows the overall process of our system, from user interaction in the frontend through agent orchestration to response delivery via streaming. In the sections that follow, we examine each individual building block in detail.

Figure 1: Architecture

The Architecture: A Team of Specialists

Instead of a single, enormous system prompt that attempts to cover every eventuality, we rely on a team of specialized agents. Each agent has a clearly defined task, its own instructions, and its own tools. Depending on the agent, different language models can be deployed — from small and fast for simple classifications, to large, high-performance models for complex reasoning tasks. A central orchestrator manages these agents, collects their results, and uses them to formulate the final response to the user.

The idea is straightforward: a complex problem is easier to solve when broken down into smaller, manageable sub-problems. Each agent is an expert in its own domain.
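To make the division of labor concrete, here is a minimal sketch of how such a team could be described in plain Python. It deliberately does not use the OpenAI Agents SDK API; `AgentSpec`, `update_filter`, the `TEAM` registry, and the model tier labels are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical description of one specialist agent."""
    name: str
    instructions: str
    model: str  # placeholder tier label, not a real model identifier
    tools: Tuple[Callable, ...] = ()


def update_filter(state: dict, key: str, value: str) -> None:
    """Hypothetical tool: write one filter into the session state."""
    state.setdefault("filters", {})[key] = value


# Each agent gets its own task, instructions, tools, and model tier.
TEAM = {
    "guardrail": AgentSpec(
        name="Guardrail Agent",
        instructions="Decide whether the request is permissible and on-topic.",
        model="small-fast-model",
    ),
    "filter": AgentSpec(
        name="Filter Agent",
        instructions="Extract explicit filter criteria from the user input.",
        model="small-fast-model",
        tools=(update_filter,),
    ),
    "reco": AgentSpec(
        name="Reco Agent",
        instructions="Recommend products based on the retrieved product data.",
        model="large-reasoning-model",
    ),
}
```

Keeping the model choice inside each agent's definition is what allows a cheap model for classification and a stronger one for reasoning, without touching the orchestrator.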

Let us take a closer look at the agents and their roles.

1. The Gatekeepers: Input Control

Before any substantive consultation begins, every user input passes through a gatekeeper phase. This phase checks in parallel whether the request is eligible for processing at all and whether a particular processing branch is immediately appropriate.

Example Guardrail Agent: The Guardrail Agent evaluates whether a request is permissible and topically relevant. It filters out inappropriate content, insults, or completely off-topic questions (e.g., “What is the capital of Zimbabwe?”). If the check fails, the process is terminated early and a friendly rejection is formulated.

Other gatekeepers run alongside it, for example a Reco Intent Agent that recognizes when a user is ready for a product recommendation and can trigger the recommendation process directly.
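The gatekeeper phase could be sketched roughly as follows. The two check functions are keyword-based stand-ins for LLM-backed agents, and all names (`guardrail_check`, `reco_intent_check`, `GateResult`) are assumptions made for this example rather than parts of the actual system.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class GateResult:
    allowed: bool
    wants_recommendation: bool


async def guardrail_check(text: str) -> bool:
    """Stand-in for the Guardrail Agent; a real system would call a model."""
    await asyncio.sleep(0)  # simulates an awaitable model call
    return "capital of" not in text.lower()


async def reco_intent_check(text: str) -> bool:
    """Stand-in for the Reco Intent Agent."""
    await asyncio.sleep(0)
    return "recommend" in text.lower()


async def gatekeeper_phase(text: str) -> GateResult:
    # Both gatekeepers run concurrently to keep latency low.
    allowed, wants_reco = await asyncio.gather(
        guardrail_check(text), reco_intent_check(text)
    )
    # A rejected request never reaches the recommendation branch.
    return GateResult(allowed=allowed, wants_recommendation=allowed and wants_reco)
```

If `allowed` is false, the orchestrator would stop here and hand over to a friendly rejection instead of starting the analysts.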

2. The Analysts: Understanding & Structuring

Once a request has passed the gatekeepers, a set of analyst agents starts up to evaluate the conversation from different perspectives. They typically run in parallel to keep latency low. The goal is to derive structured signals from free text: filters, requirements, knowledge questions, contradictions, the next sensible follow-up question, and so on.

Example Filter Agent: The Filter Agent extracts explicit filter criteria from user input, such as price (“max. €2,000”), manufacturer (“Jura only, please”), or category (“I’m looking for a fully automatic machine”). Via dedicated tools, it can update the session state so that these filters are applied immediately in the web shop interface. The user sees the result instantly in the product list.

Further analyst agents cover, for example, requirements, follow-up questions, knowledge-based answers, product attributes, and contradiction detection. They are activated depending on the context.
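The Filter Agent's tool-based state update might look like the sketch below. It assumes the agent returns its findings as a structured object; the `Filters` and `SessionState` classes and the `update_filters` tool are hypothetical, and the extraction itself (the LLM call) is left out.

```python
from dataclasses import dataclass, field, fields
from typing import List, Optional


@dataclass
class Filters:
    """Assumed structured output of the Filter Agent."""
    max_price_eur: Optional[int] = None
    manufacturer: Optional[str] = None
    category: Optional[str] = None


@dataclass
class SessionState:
    filters: Filters = field(default_factory=Filters)


def update_filters(state: SessionState, extracted: Filters) -> List[str]:
    """Tool: copy newly extracted filters into the session state.

    Returns the names of the filters that actually changed, so the
    frontend only needs to refresh when the list is non-empty.
    """
    changed = []
    for f in fields(Filters):
        value = getattr(extracted, f.name)
        if value is not None and getattr(state.filters, f.name) != value:
            setattr(state.filters, f.name, value)
            changed.append(f.name)
    return changed
```

For the input "Jura only, please, max. €2,000", the agent would be expected to produce something like `Filters(max_price_eur=2000, manufacturer="Jura")`, which the tool then merges into the session.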

3. The Executors (Action Agents): Actions & Results

Based on the analysis, the orchestrator decides whether actions should be carried out and, if so, which ones: for example, generating recommendations, modifying the shopping cart, or performing a consistency check. The action agents that do this work typically access tools and data sources and return concrete results.

Example Reco Agent: When sufficient requirements have been gathered, the Reco Agent generates recommendations using Retrieval-Augmented Generation (RAG): the collected requirements are submitted as a search query against a vector database containing product information. The most relevant results serve as context from which the agent formulates a recommendation grounded in real product data.

Other action agents exist alongside it, for instance a Cart Checker Agent that verifies whether the selected products are compatible once something has been added to the cart.
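The retrieval step of the Reco Agent can be illustrated with a toy example. The sketch below replaces the vector database with an in-memory list and uses hand-made three-dimensional vectors instead of real embeddings; the products, vectors, and function names are invented purely for illustration.

```python
import math
from typing import Dict, List


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Toy "vector database"; a real system would store model-generated embeddings.
PRODUCTS = [
    {"name": "Machine A", "text": "fully automatic, milk system", "vector": [0.9, 0.8, 0.1]},
    {"name": "Machine B", "text": "portafilter, manual", "vector": [0.1, 0.2, 0.9]},
    {"name": "Grinder C", "text": "burr grinder accessory", "vector": [0.2, 0.1, 0.3]},
]


def retrieve(query_vector: List[float], k: int = 2) -> List[Dict]:
    """Return the k products most similar to the query vector."""
    ranked = sorted(
        PRODUCTS, key=lambda p: cosine(query_vector, p["vector"]), reverse=True
    )
    return ranked[:k]


def build_reco_context(requirements: str, hits: List[Dict]) -> str:
    """Assemble the context from which the agent formulates its recommendation."""
    lines = [f"- {p['name']}: {p['text']}" for p in hits]
    return f"Requirements: {requirements}\nProducts:\n" + "\n".join(lines)
```

Because the agent only sees the retrieved entries, its recommendation stays grounded in real product data rather than in whatever the model happens to remember.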

The Conductor: The Orchestrator

The heart of the system is the orchestrator. It operates like an agent itself and governs the entire workflow. In simplified terms, the following happens:

- Gatekeeper phase, analysis phase, and decision logic: The orchestrator links the building blocks described above into a coherent flow. It checks incoming requests, lets the appropriate analysts work in parallel, and derives from their output whether a recommendation is already appropriate or whether additional information is still needed.

- Aggregation: The outputs of all agents that have run are merged into a single, clear input prompt. This is handled by a central aggregation function.

- Final response: A final Response Agent receives this aggregated input and formulates the reply to the user. This agent has one task only: to synthesize the information delivered by the specialist agents into a fluent, well-structured, and friendly response. Its instruction prompt contains very strict rules. For example, it is not permitted to invent or add any information that was not supplied by one of the sub-agents.
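The aggregation step and the strict input for the Response Agent could be sketched as follows. The section format, the wording of `RESPONSE_RULES`, and both function names are assumptions made for this example, not the actual prompt or code of the system.

```python
from typing import Dict

# Hypothetical wording of the strict rule for the Response Agent.
RESPONSE_RULES = (
    "Use only the information in the sections below. "
    "Do not invent or add facts that no sub-agent supplied."
)


def aggregate(outputs: Dict[str, str]) -> str:
    """Merge the outputs of all agents that ran into one labeled prompt."""
    sections = [
        f"[{agent}]\n{text.strip()}"
        for agent, text in outputs.items()
        if text and text.strip()  # agents without output add no noise
    ]
    return "\n\n".join(sections)


def build_response_input(outputs: Dict[str, str]) -> str:
    """Combine the rules and the aggregated findings for the final agent."""
    return f"{RESPONSE_RULES}\n\n{aggregate(outputs)}"
```

Labeling each section with its source agent also helps later when tracing a poor answer back to the agent that caused it.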

The Magic Behind the Scenes: Frontend-Backend Communication

A modern e-commerce advisor is more than just a chat window. Users interact with the entire web interface: they click on filters, add products to the cart, or browse detail pages. A truly intelligent chatbot must be able to perceive these actions and respond to them in context.

This is precisely where the strength of our architecture lies — built on a FastAPI backend and Server-Sent Events (SSE).

When Users Don’t Just Type, But Also Click

The classic case is straightforward: the user writes a message, the frontend sends it to the server, the orchestrator runs, and the response is streamed back token by token.

Things become more interesting when the user does not type at all, but instead interacts with the interface, for example, by setting a manufacturer filter, moving the price slider, or clicking “Add to cart”. Each of these actions triggers a call to a dedicated API endpoint in the background.

Let us look at the manufacturer filter as an example. When the user clicks on “Jura”, the following happens:

- Session state is modified: The selected manufacturer is saved in the session state as an active filter.

- A system event is generated: A system message is inserted into the chat history, such as: “FrontendEvent: The user clicked to add the manufacturer ‘Jura’ as a filter”.

- The orchestrator is launched: From here, the same process runs as with a typed message. The agents can react to the new information, and the chatbot might respond: “Understood, I’m filtering the results by Jura for you. Do you have any further preferences?”

The key trick is that UI interactions are treated like a normal conversation. By injecting a FrontendEvent as a system message into the conversation history, we inform the LLM about what has happened outside the chat window.
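The three steps for the manufacturer filter can be sketched as a plain function. In the real system this logic sits behind a dedicated API endpoint; the web framework is omitted here, and the session layout (a dict with `filters` and `history`) as well as the function name are assumptions for illustration.

```python
from typing import Dict


def handle_manufacturer_click(session: Dict, manufacturer: str) -> Dict:
    """Record a filter click and make it visible to the agents."""
    # 1. Modify the session state: store the manufacturer as an active filter.
    session.setdefault("filters", {})["manufacturer"] = manufacturer

    # 2. Generate a system event and append it to the chat history.
    event = {
        "role": "system",
        "content": (
            "FrontendEvent: The user clicked to add the manufacturer "
            f"'{manufacturer}' as a filter"
        ),
    }
    session.setdefault("history", []).append(event)

    # 3. The orchestrator would be launched here, exactly as for a typed message.
    return event
```

Using the `system` role and the explicit `FrontendEvent:` prefix is one way to keep the model from mistaking the event for something the user typed.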

Streaming with Server-Sent Events (SSE)

All communication runs via Server-Sent Events. A single, long-lived HTTP connection is used to push data from the server to the client. This allows us to send different types of information in real time.

Concretely, we stream two types of events:

- Message events: Contain the orchestrator’s chat response, which is sent to the client token by token.

- State events: Contain the updated session state, for example, newly applied filters or the list of recommended products that the Reco Agent determined in the background.

The frontend can therefore not only display the chat message live, but also react to state changes and update the product list in real time.
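On the wire, the two event types could be framed like this. The sketch uses the standard SSE text format (an `event:` line, a `data:` line, and a blank line as terminator); the JSON payload shapes and the function names are assumptions, not the actual protocol of the system.

```python
import json
from typing import Dict, Iterable, Iterator


def sse_event(event: str, data: Dict) -> str:
    """Format one Server-Sent Event frame with a named event type."""
    # json.dumps emits a single line, so one data: field is sufficient.
    return f"event: {event}\ndata: {json.dumps(data)}\n\n"


def stream_turn(tokens: Iterable[str], state: Dict) -> Iterator[str]:
    """Yield the chat response token by token, then the updated session state."""
    for token in tokens:
        yield sse_event("message", {"token": token})
    yield sse_event("state", state)
```

In a FastAPI backend, a generator like this would typically be wrapped in a `StreamingResponse` with the media type `text/event-stream`, while the frontend registers one listener per event name.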

This tight integration of UI interactions and conversational logic makes the chatbot an integral part of the overall user experience.

Lessons Learned

In building our multi-agent system, we gained a number of insights we would like to share:

- Prompts are the most critical code: The system prompts of individual agents require just as much iteration and testing as classical program code. Small changes in wording can drastically alter an agent’s behavior.

- Parallelization pays off, but not everywhere: Running analyst agents in parallel significantly reduced response times. However, dependencies exist (for example, the Reco Agent needs the results of the Filter and Requirements Agents), which make a purely parallel architecture impossible. The key lies in a deliberate separation into independent and dependent phases.

- Less is often more: Not every agent needs to run for every request. Activating agents conditionally based on context (for example, calling the Cart Checker Agent only when the user is talking about their cart) saves costs and reduces noise in the aggregated result.

- Frontend events are powerful, but tricky: The idea of injecting UI interactions as system messages into the chat history works extremely well. But the wording of these events must be chosen carefully so that the LLM interprets them correctly and does not treat them as genuine user input.

- Evaluation remains the biggest challenge: With so many agents, it is difficult to determine which agent is responsible for a good or bad overall result. Developing systematic testing strategies — from isolated agent tests to end-to-end scenarios — is one of the most exciting open tasks ahead.
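The points about phases and conditional activation can be combined in a small planning sketch. The agent keys, the keyword trigger for the cart, and the `plan_phases` function are hypothetical simplifications; a real system would derive these decisions from the analysts' structured output.

```python
from typing import List


def plan_phases(last_message: str, ready_for_reco: bool) -> List[List[str]]:
    """Return agent names grouped into phases.

    Agents within a phase are independent and can run in parallel;
    a later phase depends on the results of the earlier one.
    """
    independent = ["filter", "requirements"]

    dependent = []
    if ready_for_reco:
        # The Reco Agent needs the filter and requirements results first.
        dependent.append("reco")
    if "cart" in last_message.lower():
        # The Cart Checker Agent only runs when the cart is the topic.
        dependent.append("cart_checker")

    # Drop empty phases so the orchestrator never schedules a no-op step.
    return [phase for phase in (independent, dependent) if phase]
```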

Conclusion: Managing Complexity Through Specialization

The multi-agent approach presented here is more complex to set up than a monolithic chatbot, but it offers decisive advantages. The underlying principle is one we know from human collaboration: a team of specialists generally achieves better results than individual generalists, because perspectives and strengths complement one another and responsibilities are clearly distributed.

We apply exactly this logic to the chatbot:

- Specialization produces more precise and reliable results.

- Maintainability arises from clearly delineated responsibilities: changes to cart validation, for example, only require adjustments to the Cart Checker Agent.

- Model flexibility allows the most appropriate model to be chosen per agent, including local models for sensitive data.

- Control and safety are enhanced through guardrails and strict rules for the final response agent.

This architecture enables us to create a digital advisor that stands up to its human counterpart in every respect, finally offering users the helpful advisory experience they deserve in online retail.

Curious to learn more? At CID, we build exactly these kinds of solutions: tailored to your products, processes, and target audiences. Get in touch. We look forward to hearing about your project.